Don't Use a Scalpel to Peel an Apple

In the operating room, a surgeon holds a scalpel, cutting through layers of skin with precision. One millimeter off and you sever an artery. Hit the right spot and you save a life.

In the kitchen, a chef holds a paring knife, peeling skin from fruit. Quick. Simple. No millimeter precision needed.

Both are knives. Both cut. But would you use a scalpel to peel an apple?

Nobody does that. Because it's wasteful. Because it's unnecessary. Because a $2 paring knife does the same job as a $200 surgical blade.

Yet in the software world, I see people doing this every day.

"I want to use an AI Agent"

Last Tuesday. Design review meeting.

Minh - a fairly senior dev on our team - was presenting his solution for what seemed like a simple task: every morning, aggregate sprint metrics from Jira, get CI/CD status from Jenkins, then post a summary to Google Chat for the team.

"I'll use an AI Agent," Minh said confidently.

I raised an eyebrow. "AI Agent?"

"Yes. The Agent will read data from various sources, analyze whether the sprint is healthy, then write a summary with intelligent insights. Like a bot that can think."

Sounds great. Very "2026". The whole team nodded along.

But something felt off.

"Do the metrics change?" I asked. "Today velocity, tomorrow cycle time?"

"No. Always: tickets done, tickets in progress, blocked tickets, build status."

"Does the aggregation method change?"

"No. Always just count and list."

"So... why do you need an AI Agent?"

Silence.

Minh looked at me. I looked at Minh. The whole team looked at each other.

That was the moment I realized: Minh was holding a scalpel to peel an apple.

When scalpels become trendy

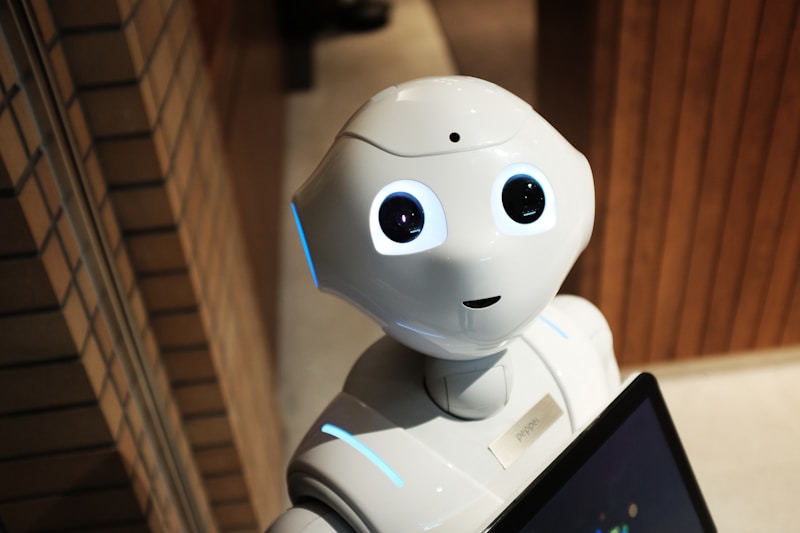

Ever since ChatGPT exploded, "AI Agent" has become a magic keyword. Every proposal tries to squeeze AI in. Every solution has the word "intelligent" or "autonomous."

I understand this mindset. Fear of being left behind.

If you don't use AI, you seem to be coding "old-school." If you're still writing cron jobs like 10 years ago, you seem behind the trends. In interviews, saying "I wrote cron jobs" sounds far less sexy than "I built AI Agents."

But what I've observed is the complete opposite.

The strongest teams aren't the ones using the most AI. They're the ones who know when NOT to use AI.

A cron job that queries the Jira API and the Jenkins API every morning, formats the results into a fixed message template, and posts them to Google Chat has no reason to call an LLM at all.

Higher cost. Higher latency. Non-deterministic output - today it writes one way, tomorrow another. And debugging... good luck.

Three stories about knives

To understand the boundary between AI Agent and cron job, let me tell three real stories from our team.

Story 1: An and the right scalpel

An is our QC lead. She had a pain point: every week she had to read hundreds of user bug reports, classify each one by severity, and assign it to the right team.

An proposed: "How about we build an AI Agent to auto-classify bugs?"

Makes sense. Users report bugs in natural language, and everyone expresses things differently. One person writes "app crashes when I tap the payment button," another writes "I can't buy anything." The Agent reads each report, understands the intent, classifies the severity, and assigns it to the right team.

The team spent 3 weeks building it: LangChain, prompt engineering, evaluation sets, a complete system.

Result? Worked beautifully.

92% accuracy. Classification time dropped from 2 hours a week to 15 minutes of review. An was happy. The team was happy.

This is using a scalpel for surgery. Right tool, right job.

Story 2: Minh and the scalpel peeling apples

Back to Minh and his sprint metrics bot.

After the review, Minh rewrote it as a cron job. A Python script, 80 lines. Runs every morning at 6am. Queries the Jira API, queries the Jenkins API, formats the results into a fixed message template, posts to Google Chat.

No LLM. No Agent. No "intelligent."

Result? It has been running stably for 4 months now. No bugs. No maintenance needed. Cost nearly zero.

What if Minh had used an AI Agent as originally planned?

Daily LLM costs. Inconsistent output - today it writes "Sprint is healthy," tomorrow "Team is doing well," next day "Velocity is stable." Same data but different messages, making it hard for the team to compare trends.

This was nearly a case of using a scalpel to peel an apple. Good thing he stopped in time.

Story 3: Nhi and two knives in the right places

Nhi is the tech lead for our platform team. She had a more complex problem: processing e-commerce orders.

The pipeline has 10 steps: validate input, check inventory, calculate price, classify special customer requests, apply discount, update database, generate invoice, write response email, send notification, log audit.

Nhi didn't pick one tool for everything. She broke it down and chose the right knife for each task.

8 of the 10 steps? Cron jobs and traditional code. Validate input with if/else. Check inventory with database queries. Calculate price with fixed formulas. Deterministic. Testable. Reliable.

Remaining 2 steps? AI Agent.

Classify special customer requests - because customers write in natural language, each in their own way. "Deliver before 5pm please," "Ship to my mom's place in the countryside," "Package carefully, it's a gift." You can't write if/else for all of these.

Write personalized response emails - because each order needs a different email based on context, not a rigid template.

Result? The pipeline runs smoothly. AI costs are concentrated on the 2 nodes that actually need it. The other 8 nodes are cheap, fast, and easy to debug.
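Here is a minimal Python sketch of that split, compressed to a few of the ten steps. The `Order` shape, the 10% discount, and the `llm` callable (any function that takes a prompt and returns text) are hypothetical stand-ins, not Nhi's actual code. The point is structural: the deterministic steps are ordinary functions, and the model is called in exactly two places.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable

# Hypothetical: any function that takes a prompt and returns the model's text.
LLM = Callable[[str], str]


@dataclass
class Order:
    sku: str
    quantity: int
    unit_price: Decimal
    note: str = ""  # free-text request written by the customer


# --- Deterministic steps: plain code, cheap and testable ---

def validate(order: Order, stock: dict[str, int]) -> None:
    if order.quantity <= 0:
        raise ValueError("quantity must be positive")
    if stock.get(order.sku, 0) < order.quantity:
        raise ValueError(f"not enough stock for {order.sku}")


def price(order: Order, discount_pct: Decimal) -> Decimal:
    subtotal = order.unit_price * order.quantity
    return (subtotal * (100 - discount_pct) / 100).quantize(Decimal("0.01"))


# --- Reasoning steps: free text with no fixed shape, delegated to the model ---

def classify_request(order: Order, llm: LLM) -> str:
    if not order.note:
        return "none"  # nothing to interpret, so skip the model call
    return llm(f"Classify this delivery request in one word: {order.note}")


def write_email(order: Order, total: Decimal, llm: LLM) -> str:
    return llm(f"Write a short order confirmation. Total: {total}. Customer note: {order.note!r}")


def process(order: Order, stock: dict[str, int], llm: LLM) -> dict:
    validate(order, stock)                            # deterministic
    total = price(order, discount_pct=Decimal("10"))  # deterministic (hypothetical 10% off)
    request_type = classify_request(order, llm)       # AI
    email = write_email(order, total, llm)            # AI
    return {"total": total, "request_type": request_type, "email": email}
```

In a test, a fake `llm` lets you exercise the deterministic steps without paying for a single model call; in production you would pass in a wrapper around a real client.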

This is knowing when you need a scalpel and when you need a paring knife.

So where's the boundary?

After wrestling with this question many times, I realized the boundary isn't about task complexity. Not about the number of data sources. Not about whether the task is "important."

The boundary is about the nature of ambiguity.

I use a simple test: "Can I write step-by-step instructions that anyone could follow correctly?"

For Minh's task, I could write:

1. Call Jira API, get ticket count where status = "Done"
2. Call Jira API, get ticket count where status = "In Progress"
3. Call Jenkins API, get status of last 3 builds
4. Format as template: "Done: X, In Progress: Y, Build: Z"
5. Post to Google Chat channel #team-daily
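As a rough illustration, those five steps fit in a short Python script using only the standard library. It's a sketch, not Minh's actual script: the instance URLs, the webhook URL, and the auth header are placeholders, and it assumes Jira's classic `/rest/api/2/search` endpoint (which reports a `total` count) and Jenkins' JSON remote API, so check both against the versions you run.

```python
import json
import urllib.parse
import urllib.request

# Placeholders (hypothetical): point these at your own instances.
JIRA_URL = "https://your-company.atlassian.net"
JIRA_HEADERS = {"Authorization": "Basic <base64 user:api-token>"}
JENKINS_JOB_URL = "https://jenkins.example.com/job/main-build"
CHAT_WEBHOOK_URL = "https://chat.example.com/webhook"  # your Google Chat incoming webhook


def get_json(url: str, headers: dict) -> dict:
    req = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)


def count_tickets(status: str) -> int:
    # Steps 1-2: maxResults=0 asks only for the count, not the issues themselves.
    # Narrow the JQL (e.g. to the current sprint) to match your board.
    jql = urllib.parse.quote(f'status = "{status}"')
    data = get_json(f"{JIRA_URL}/rest/api/2/search?jql={jql}&maxResults=0", JIRA_HEADERS)
    return data["total"]


def last_build_results(n: int = 3) -> list[str]:
    # Step 3: the {0,n} range in `tree` limits the response to the newest n builds.
    # Add auth headers here if your Jenkins requires them.
    data = get_json(f"{JENKINS_JOB_URL}/api/json?tree=builds[result]{{0,{n}}}", {})
    # `result` is null while a build is still running.
    return [build["result"] or "IN_PROGRESS" for build in data["builds"]]


def format_summary(done: int, in_progress: int, builds: list[str]) -> str:
    # Step 4: a fixed template, so the same data always yields the same message.
    return f"Done: {done}, In Progress: {in_progress}, Build: {', '.join(builds)}"


def post_to_chat(text: str) -> None:
    # Step 5: Google Chat incoming webhooks accept a JSON body with a "text" field.
    body = json.dumps({"text": text}).encode("utf-8")
    req = urllib.request.Request(
        CHAT_WEBHOOK_URL,
        data=body,
        headers={"Content-Type": "application/json; charset=UTF-8"},
    )
    with urllib.request.urlopen(req, timeout=30):
        pass


def main() -> None:
    summary = format_summary(
        count_tickets("Done"),
        count_tickets("In Progress"),
        last_build_results(3),
    )
    post_to_chat(summary)
```

Scheduling stays with cron, e.g. a crontab line like `0 6 * * * python3 /path/to/sprint_summary.py` whose entry point calls `main()` (the path is hypothetical). `format_summary` is a pure function: same data in, same message out.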

An intern who knows nothing about the project could read these 5 steps and do it 100% correctly. No thinking needed. No judgment required. Just follow the steps. That's a cron job's work.

An's task is different. Try writing instructions:

1. Read bug report from user
2. Understand what problem they're facing
3. Assess severity level
4. Assign to the appropriate team

Steps 2, 3, and 4 all require understanding context. A user writes "app doesn't work" - is it crashing? Loading slowly? A UI bug? Every report is different, and you can't list every possible case. That's work for an AI Agent.
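To make that concrete, here's a deliberately naive rule-based triage in Python. The keywords, severities, and team names are made up for illustration. It misses "I can't buy anything" entirely, because none of the keywords appear, and even the report it does match lands on the mobile team although it mentions payments: rule order decides, not meaning.

```python
from typing import Optional, Tuple

# Hypothetical rules: keyword -> (severity, team). Checked in insertion order.
RULES = {
    "crash": ("critical", "mobile"),
    "payment": ("critical", "payments"),
    "slow": ("minor", "performance"),
}


def classify_by_keywords(report: str) -> Optional[Tuple[str, str]]:
    """Return (severity, team) for the first matching keyword, else None."""
    text = report.lower()
    for keyword, verdict in RULES.items():
        if keyword in text:
            return verdict
    return None  # no rule matched: someone still has to read it
```

Every new phrasing means another rule, and the rules start to conflict long before they cover real user reports.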

The thing few mention: When the scalpel cuts wrong

There's one factor that AI blog posts rarely discuss: the cost when the system fails.

When a cron job fails, you know where it failed. The flow is deterministic. Check logs, trace, fix. Might take a few hours, but you know the path.

When an AI Agent fails, sometimes you don't know why it failed. The same input can produce different outputs each run. Debugging a non-deterministic system is much harder.

Last week the payment team had an incident. An AI Agent classifying transactions suddenly flagged some as "suspicious" when nothing was abnormal. Finding the cause took 2 days because there were no clear logs explaining why the model decided that way.

If it had been a rule-based system with if/else, debugging would have taken maybe 2 hours.

So the question isn't just "Does this task need AI?" but also:

"If AI fails on this task, what are the consequences, and can we detect it?"

If consequences are severe and hard to detect - like calculating the wrong amount on an invoice - then even if the task seems complex, I lean toward hard logic with clear validation.
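A minimal sketch of what "hard logic with clear validation" can look like for an invoice total, in Python. The tax rate, rounding mode, and checks are assumptions for illustration; the idea is that money is computed with `Decimal` rather than floats, and bad input fails loudly instead of producing a quietly wrong amount.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def invoice_total(lines: list[tuple[Decimal, int]], tax_rate: Decimal) -> Decimal:
    """Sum (unit_price, quantity) lines, add tax, and round to cents.

    Every rule is explicit, so a wrong total can be traced to one line of code.
    """
    # Validate inputs up front: refuse to compute rather than guess.
    if not lines:
        raise ValueError("invoice has no line items")
    for price, qty in lines:
        if price < 0 or qty <= 0:
            raise ValueError(f"invalid line item: {price} x {qty}")
    if not (Decimal("0") <= tax_rate < Decimal("1")):
        raise ValueError(f"implausible tax rate: {tax_rate}")

    subtotal = sum((price * qty for price, qty in lines), Decimal("0"))
    return (subtotal * (1 + tax_rate)).quantize(CENT, rounding=ROUND_HALF_UP)
```

For example, two items at 19.99 plus one at 5.00 with 8% tax gives a subtotal of 44.98 and a total of 48.58, every time.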

Jobs for the paring knife

On our team, there are many tasks where cron jobs beat AI Agents:

Database backup every night - dump, compress, upload to S3, delete old backups. Completely deterministic logic.

Rotate log files and clean disk - check disk space, compress old files, delete expired ones. All if/else.

Service health checks - ping endpoints every 5 minutes; 3 consecutive failures trigger a restart and an alert. Simple thresholds.
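The whole rule fits in a few lines. A Python sketch under stated assumptions: `ping` treats any 2xx response within the timeout as healthy, and the restart and alert actions are left as a hypothetical hook for whatever your infrastructure uses. Because each cron run is a fresh process, the failure count would need to live in a file or similar between runs; the sketch keeps it in memory for simplicity.

```python
import urllib.request
from dataclasses import dataclass


def ping(url: str, timeout: float = 5.0) -> bool:
    """Return True if the endpoint answers with a 2xx status in time."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except OSError:  # URLError, HTTPError (4xx/5xx) and timeouts all derive from OSError
        return False


@dataclass
class FailureCounter:
    threshold: int = 3  # consecutive failures before acting
    failures: int = 0

    def record(self, ok: bool) -> bool:
        """Record one check; return True when it's time to restart and alert."""
        if ok:
            self.failures = 0
            return False
        self.failures += 1
        if self.failures >= self.threshold:
            self.failures = 0  # the next alert needs 3 fresh failures
            return True
        return False
```

A single success resets the count, so a flaky endpoint that fails twice and recovers never triggers a restart.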

Sync data between two systems - pull from CRM, map fields, insert/update. Data changes but processing method doesn't.
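Here's the shape of that in Python, with an in-memory dict standing in for the target database. The CRM field names and the mapping are hypothetical. The records change every day; the mapping and the insert-or-update rule don't.

```python
# Hypothetical mapping from CRM field names to our own column names.
FIELD_MAP = {"AccountName": "name", "Email__c": "email", "Phone": "phone"}


def map_record(crm_record: dict) -> dict:
    """Rename CRM fields to our schema; missing fields become None."""
    return {ours: crm_record.get(theirs) for theirs, ours in FIELD_MAP.items()}


def sync(store: dict, crm_records: list[dict], key: str = "Id") -> tuple[int, int]:
    """Insert new records, update changed ones, and skip unchanged ones.

    Returns (inserted, updated) so the job can log exactly what it did.
    """
    inserted = updated = 0
    for rec in crm_records:
        mapped = map_record(rec)
        existing = store.get(rec[key])
        if existing is None:
            inserted += 1
        elif existing != mapped:
            updated += 1
        else:
            continue  # unchanged: no write needed
        store[rec[key]] = mapped
    return inserted, updated
```

Running it twice on the same data writes nothing the second time, which makes reruns after a failure safe.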

Generate test coverage reports - run coverage tool, aggregate numbers, render HTML, send email. Input is numbers, output is tables.

Clean old Git branches - scan repos, find branches merged more than 30 days ago, delete them. Clear rules.
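A sketch of the selection rule in Python, kept as a pure function so it's easy to test. It assumes you've already collected each branch's merge date (for example from your Git host's API) and that `main` and `develop` are protected; both are assumptions. The actual deletion would then be something like `git push origin --delete <branch>` for each returned name.

```python
from datetime import datetime, timedelta
from typing import Optional

PROTECTED = {"main", "develop"}  # hypothetical: branches that are never deleted


def branches_to_delete(
    merged_at: dict[str, Optional[datetime]],
    now: datetime,
    max_age_days: int = 30,
) -> list[str]:
    """Return branches merged more than `max_age_days` ago, excluding protected ones.

    A value of None means the branch was never merged, so it is kept.
    """
    cutoff = now - timedelta(days=max_age_days)
    return sorted(
        name
        for name, when in merged_at.items()
        if name not in PROTECTED and when is not None and when < cutoff
    )
```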

These tasks run daily, weekly, monthly. Silently. Stably. No "intelligence" needed. And that's exactly their strength.

Jobs for the scalpel

Conversely, there are tasks where an AI Agent is genuinely the right tool:

Automated code review - every PR has different code and different context. The Agent reads diffs, understands intent, and comments on logic and design smells. Linters mostly catch syntax and style issues.

Root cause analysis from logs - during production incidents, logs are long and messy. Agent reads thousands of lines, correlates between services, finds abnormal patterns.

Answering questions from internal knowledge base - new dev asks "what protocol does service A use to call service B?" Agent searches wiki, Confluence, Slack history and answers with specific context.

Assessing the impact of database schema changes - when altering tables, the Agent analyzes the codebase to see which queries depend on the schema and assesses the risk.

Analyzing security vulnerabilities - when Dependabot reports a CVE, Agent reads the description and checks if our codebase actually uses the affected function.

These tasks require understanding semantics, not just processing data. Each different input needs different processing. Can't write all the if/else.

Pattern: Hybrid done right

Most good production systems will be hybrid - like Nhi's pipeline. But "hybrid" doesn't mean mixing randomly.

How I think about it: envision the pipeline as a series of steps. At each step, ask: "Does this step require reasoning?"

If yes → AI Agent.

If no → Cron job / traditional code.

Don't turn the whole pipeline into one "mega agent." Expensive. Slow. Hard to debug. And usually unnecessary.

Closing thoughts

Back to the review meeting with Minh.

After discussing, Minh decided to write a cron job. 80 lines of Python. Runs at 6am. No drama.

A month later, Minh proposed using an AI Agent again - but this time for something else: automatically summarizing and categorizing feedback from user surveys. Every survey response is written differently. Different sentiments. Different topics.

This time the team approved immediately. Because that was the right job for the right tool.

A scalpel isn't better than a paring knife. A paring knife isn't better than a scalpel. They're good at different things.

An AI Agent is a powerful tool. But the most powerful tool isn't the one that can be used everywhere - it's the one used in the right place.

Next time you're about to propose using an AI Agent, pause for a second and ask yourself:

"I'm holding a scalpel. But does this job... actually need surgery?"

Sometimes the simplest answer is the right answer.